Overview

The Stats MA at Berkeley is a two- or three-semester program, with about a third of students electing to do a third semester. In order to complete the program, you must complete a capstone project and the comprehensive exams. There is a “thesis option” but only a couple of students per year choose it. The program has an industry focus, with no option to transition into the PhD program upon completion. As such, there is no overlap between the master’s students and the PhD students in the coursework. If you are able to make connections separately to find a suitable RA position at Berkeley, you will be able to do research, but it is not a part of the Stats MA program. My cohort had ~50 students, with around 40% being from mainland China, a smaller share of students from India, and international students from several other countries. Of the students holding American passports, a few were from the east coast, and the rest were California natives.

During my time in the program, the head of the department assured us that anyone hoping to be a GSI in the second or third semester would be assigned a position, usually for a lower-division undergraduate course. I was also a GSI in my first semester, which requires special permission; I worked for the math department and applied through their separate application process rather than receiving a guaranteed assignment. In my second semester I was a GSI for an upper-division statistics course.

In the summer preceding the program, the department offered a two-week “Summer bridge” preparation, with bootcamps in R, Python, Stats, Probability, and some review of calculus and linear algebra. The R and Python sections were very useful, and the others were not as much, but YMMV.

In the years before I arrived, the Stats MA coordinator had a strong positive influence on the students’ preparation for industry, but she left just before my program began. Our class suffered from the lack of career counseling in conjunction with the market crash of 2020, so I cannot speak to the counterfactual career preparation offered by the program. However, if you sift carefully through the alumni list, you can use LinkedIn to find some reasonable trends in career placements, as well as the relative start dates compared to graduation month.

In terms of expected salary upon graduation, you can check the Data Scientist levels.fyi page for industry standards, and benchmark with your current years of experience. From the modest sample I have collected from colleagues as they shared about negotiating offers and talking numbers, 100k is very attainable if you’re staying in a HCOL (high cost of living) area like the bay or another major city. Of course, if you are able to land a Data Scientist role at a well funded fast growing startup or at a large company, you may be able to achieve well over 200k total compensation. This is highly idiosyncratic at the student level and will depend heavily on your ability to network, communicate your skill set, and negotiate. Total compensation is also highly idiosyncratic on the firm and even team within firm level, where some hiring managers will have a headcount to fill and a specific need for their project that you might be more or less compatible with. Remember also that you’re competing with top students from top programs from the entire world to land a top job in the bay, not just your fellow classmates at Cal.

Update: The UC Berkeley Statistics department has since updated their website to include much more information than before

How can you prepare for the program?

The stats MA program combines probability, statistics, linear algebra, computer science, and some elements of pure mathematics all together in one. Unless you have a double major in Stats and CS with a minor in math and a full upper division course in linear algebra, you’ll have to learn some things for the first time or brush up on other forgotten material.

Coding / Computer Science:

- If you don’t have coding experience, consider practicing R, SQL, Unix, Python, and the basics of HTML before the semester begins. You’ll also need to know a bit of R Markdown and LaTeX, but those are easier to learn quickly.

- R - check out swirl! Go through as many modules as possible. I wish I had done this earlier, and become more fluent before class started.

- SQL, HTML, and Python - check out w3schools, and do the first few lessons.

- LaTeX - get an account on overleaf.com. It’s the most user friendly and easy to share. You don’t have to worry about a fancy compiler or IDE.

- git - you will need to understand git and GitHub, and learn to use them from the terminal.

- Unix - these are the commands you type in the terminal (the bash shell, aka the command line). They are very useful to understand and will be necessary in the Stats 243 assignments.

Probability and Statistics:

- If you don’t have a strong probability and statistics background, go through the book Mathematical Statistics and Data Analysis by John Rice (a quick google search will yield a free pdf version). It’s what the Berkeley upper division classes use to prepare students for 201A and 201B. The classes are called “Stats 134 - Probability” and “Stats 135 - Statistics”. If you can do most of the problems in John Rice, you’ll be in a good position to begin 201A and 201B. In my case, I had only taken a single statistics class which didn’t cover much of what was in John Rice, so the learning curve was incredibly steep. I spent most of my time studying undergrad textbooks to catch up to what was going on in class.

Linear Algebra:

Coming into the program, I had several semesters of abstract algebra and linear algebra under my belt from undergrad as a math major, and it was a huge help. As time has gone on in the program, it has become more and more valuable. Students who are not strong in linear algebra hated the end of Stats 243, which was very heavy on linear algebra, and are completely lost in Stats 230 - Linear Models. If you have the bandwidth, consider auditing a lower division class, or check out David Lay’s undergraduate level book (Math 54 at Cal) or Stephen Friedberg’s upper division level linear algebra text.

Coursework:

Fall Semester:

Stats 243 - Statistical Computing

- Taught by Professor Chris Paciorek

- Focuses on using R, Unix, and (more minimally) SQL to carry out statistical computing.

Stats 201A - Probability

- Taught by Professor Aditya Guntuboyina

- Here’s a pdf of the lecture notes from 2018: Link to Document

- Every part of those lecture notes is necessary for the second semester coursework. Any part that is unclear will be something you have to re-learn in the second semester.

Stats 201B - Statistics

- Taught by Professor Haiyan Huang

- Follows material similar to Professor Larry Wasserman’s “All of Statistics” textbook. Homework and exam problems are often taken directly from Professor Wasserman’s materials.

Spring Semester:

Stats 222 - Capstone project course

- Taught by Thomas and Libor

- Textbook: “Elements of Statistical Learning” by Hastie, Tibshirani, Friedman

- Historically, this class is only offered in the evenings, so RIP your Tuesday and Thursday evenings.

- In the lectures, a very broad view of different statistical models is presented.

- I highly recommend making your own schedule for reading relevant resources to understand the material.

- I haven’t utilized the office hours offered by the professors, but I have colleagues who have reported positive experiences.

Stats 230 - Linear Models

- Taught by Professor Peng Ding

- Utilizes material from 201A and 201B, as well as a great deal of intermediate to advanced linear algebra content to build a foundation for linear models. An alternative name for the class could be “Regression” or “Methods of Regression”.

- Peng is extremely knowledgeable and dedicated to this course. I’ve sent several emails to ask more granular linear algebra questions or brief clarifications, and he is very quick to respond.

Qualifying elective of your choice

- Most students took an elective in the stats department, which is a standard lecture based class with homeworks due every one or two weeks.

- Alternatively, you can select a project based machine learning course in IEOR, CS, INFO, or other qualifying department.

- Note: If your units don’t add to 12 (because the project classes often are only 3 units, while Linear models and capstone are 4 each), you’ll need to add a seminar for one unit. This may be one hour per week where a guest lecturer will come and talk about a specific area of their research. Most of the content will go straight over your head, but it’s good experience encountering new and novel research. Also, if the lecture is a “job talk” where the guest speaker is hoping to get a position at Cal in the stats department, you might get to watch their research get put on blast by the more senior stats department faculty.

Evans Hall:

- Your home for the next 2-3 semesters.

- The third floor has the statistics department, and the 4th floor has the master’s lounge.

- The elevators are slow, and the main three don’t go to the ground floor; you’ll have to get off at floor 1 and walk down the stairs.

- Out of the main three elevators, the shortest distance to the lounge after arriving on the fourth floor is as follows: walking out of the east-most elevator, turn right; walking out of the middle elevator or the west elevator: turn left. A rigorous counting of floor tiles was used to make this conclusion, but a formal proof is left as an exercise to the reader. The most inefficient path to the lounge is the one that includes a lengthy discussion in the hallway about which path is optimal.

Comprehensive Exam:

- The exam was administered on January 25th 2020, and we received our results by email on February 26th.

- The material was almost identical (or exactly identical) to problem sets, midterm questions, or other practice problems we had seen in the past. It was around the difficulty of a medium-range homework problem, with nothing much easier or much more difficult.

GSI Appointments:

- GSI appointments come in different time commitment categories: 25% and 50%, corresponding to roughly 10 hrs per week and 20 hrs per week, respectively.

- Statistics MA students who are interested in being a GSI are usually offered appointments in the 2nd and 3rd semesters, and if you want to be a GSI in the first semester, you need to get special permission from the stats department.

- I held two different GSI appointments: Fall 2019 for Math 1B (second-semester calculus), and Spring 2020 for Stats 135 (upper-division statistics).

- In general, being a GSI means you have 8 hours of in-person work: four hours of instruction and four office hours, or six hours of instruction and two office hours.

- To apply, reach out to the proper coordinator for the department you want to be a GSI in, and they will send you a schedule of classes for the upcoming semester and ask you to list your preferences. Then, you will receive an offer and go through the proper paperwork and paper-signing as well as an orientation before beginning your work.

- Some appointments require a significant amount of work, and others are more relaxed. I was fortunate to have two different appointments with two different professors who, despite having polar opposite personalities, gave a similarly relaxed working schedule, which I appreciated very much in the midst of the challenging course load in the stats MA.

- Time consuming activities: typing up worksheets, quizzes, exams in LaTeX, and grading on gradescope.

Other Tips:

- Find a study group early on, and make a routine of collaborating together. Most people in the program need to work with others in order to finish the assignments. This is also important for networking later as you’re looking for jobs and learning about what’s happening in industry.

- Some of my colleagues went to all the career fairs in Fall semester, but only one or two were able to get a job offer or internship that early. Waiting until second semester is totally reasonable.

- Find a place to live that is close to Evans, even if it means paying more. Every hour of the day is valuable when you’re trying to keep up with the fast paced learning environment of the program, and commute time can make that even more challenging.

Self-Guided Learning:

Many assignments will require you to learn something new and apply it immediately. For instance, an assignment in Stats 243 early on was to do the following:

- do something called “web scraping” to obtain data (requires knowledge of HTML & CSS)

- manipulate it in RStudio using R and Python (maybe using SQL-like commands to subset / retrieve data)

- generate plots, charts, and statistical analyses (in R with particular libraries like ggplot2, or matplotlib in Python)

- produce your results in a .Rmd file (using three “languages”: R, R Markdown, and LaTeX where necessary)

- and then push the results to a remote github repository (using command line Unix).

The assignment was due 10 days after it was announced. The description of the task itself was two pages long. For some students, it meant learning up to four or five languages, two or three new programs/applications, and several packages or libraries across those languages. This is part of the learning process. It begins as something that seems entirely unreasonable, and then at the end of the ten days, it all seems obvious and you can’t imagine calling library() before you call install.packages(), and you make fun of each other when you don’t have quotes around string objects or your for loop has no conditional statement causing a runtime error.
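To give a feel for the first few steps of a workflow like that, here is a minimal sketch in Python using only the standard library. It is not the actual Stats 243 assignment: the HTML snippet, the `TableParser` class, and the city/price data are all made up for illustration, and a real task would download the page and use richer tools for parsing and plotting.

```python
from html.parser import HTMLParser
from statistics import mean

# Hypothetical page content (invented for this sketch); a real task would fetch it over HTTP.
HTML = """
<table>
  <tr><th>city</th><th>price</th></tr>
  <tr><td>Berkeley</td><td>120</td></tr>
  <tr><td>Oakland</td><td>95</td></tr>
  <tr><td>Berkeley</td><td>150</td></tr>
</table>
"""


class TableParser(HTMLParser):
    """Collect the text of every <td> cell, grouped by table row."""

    def __init__(self):
        super().__init__()
        self.rows = []          # finished rows, each a list of cell strings
        self._row = None        # row currently being read, or None
        self._in_cell = False   # True while inside a <td>

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag == "td":
            self._in_cell = True

    def handle_endtag(self, tag):
        if tag == "td":
            self._in_cell = False
        elif tag == "tr":
            if self._row:       # header rows only hold <th> cells, so they stay empty
                self.rows.append(self._row)
            self._row = None

    def handle_data(self, data):
        if self._in_cell and self._row is not None:
            self._row.append(data.strip())


# Step 1: "scrape" the table out of the HTML.
parser = TableParser()
parser.feed(HTML)

# Step 2: SQL-like subsetting, roughly SELECT price WHERE city = 'Berkeley'.
berkeley_prices = [float(price) for city, price in parser.rows if city == "Berkeley"]

# Step 3: a tiny statistical summary.
average_price = mean(berkeley_prices)
print(f"{len(berkeley_prices)} Berkeley listings, mean price {average_price:.1f}")
```

The plotting, .Rmd write-up, and git push steps are left out here; the point is only that each bullet maps to a small, learnable piece.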

Much of the material will be learned on your own, on Stack Overflow, and through others in the program with more expertise. Several classmates’ names immediately come to mind for questions of different categories: stats questions (Fitch), ggplot2 and data visualization questions (Mirella), LaTeX questions (Kyle), and linear algebra (myself!). Practice being a resource and asking for help: that’s what industry is like!

Departmental Info & Communication

You will receive many emails from the department:

- Job opportunities for PhD students (these do not apply to you).

- Updates about a seminar entitled “Multivariate extensions of isotonic regression and total variation denoising via entire monotonicity and Hardy-Krause variation”. What? You don’t know any of those words? That’s okay. I don’t either, and I’m almost done with the program.

- Notices about the SCF (the department’s Statistical Computing Facility) and how it’s being updated or maintained.

- The weekly “wind down” from the PhD students & social committee about where the social hangout event of the week will be.

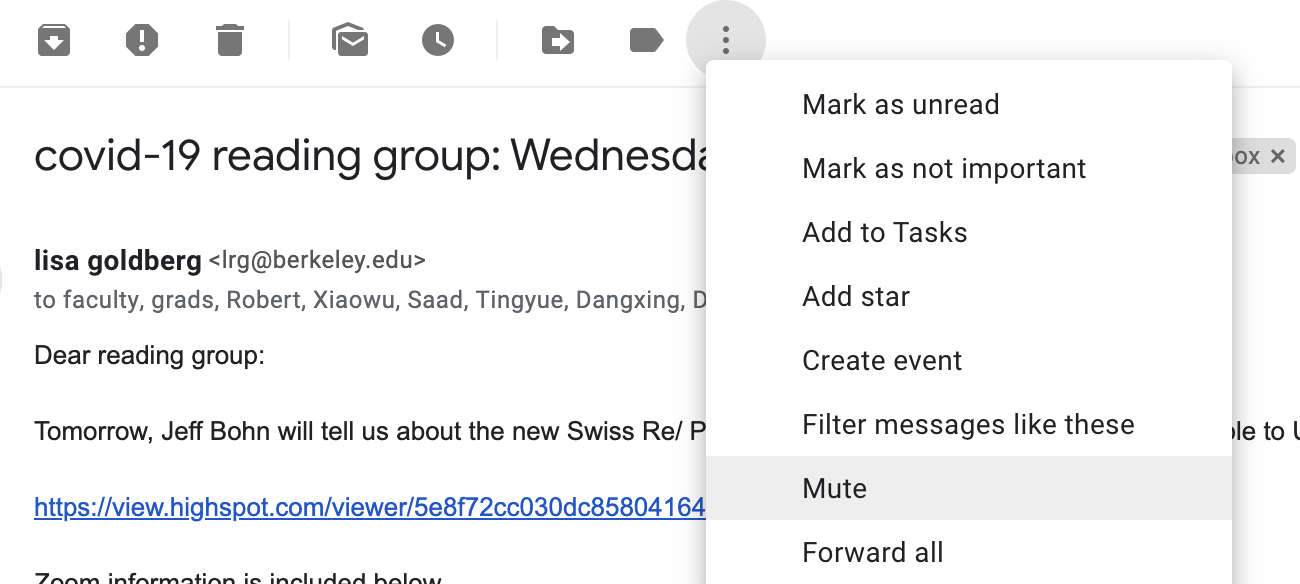

Muting Email Chains

Pro-tip: if you know an email chain will continue, but don’t want to get updates and notifications, Gmail supports “mute”. Many times a person in our department will be awarded a grant or have something named after them and a dozen or so people will reply all, which is a wonderful way to celebrate an important accomplishment! But it’s also distracting if you’re trying to work or waiting for more important / relevant emails for group projects or interviews.

FAQ:

When a new admit emails me with questions, I’ll answer them and add them here.

Question: Did you enjoy your programme?

- I enjoyed the program, yes!

Question: What were elements you enjoyed the most?

- It was rigorous and provided a significant theoretical backdrop to understand statistical methods. I enjoyed the fast paced learning environment and the comfortability we had with various tools even after a short time.

Question: What did you not like?

- Because the program is very interdisciplinary, there were elements that were completely out of my league, but reasonable for other students, and vice versa. It would have been nice to have more supplementary materials to bridge the gap. I’ve curated most of the resources on my own or after much research and asking around, which was tiresome.

Question: How much work experience do students have (I have worked in quant finance for three years)? What percentage of students have work experience?

- I would say the average is about a year of experience, with about half of the cohort coming straight out of undergrad.

Question: From the module pages and your websites the modules seem to provide a very deep understanding of fundamental statistical models. Is that true? How much does the degree cover more modern approaches of statistical learning?

- In 201B with Professor Huang, she mentions many more modern statistical methods and the capstone course gives more modern methods. Together with Professor Ding in Linear Models, you’ll have a pretty solid overview of the timeline up to the present day of how methods were developed and utilized. The answer to this question also heavily depends on your definition of “modern”, since the field is changing rapidly.

Question: How much application is included in the degree? From what I understand from the module page and your website, the lectures are theoretical but the coursework will make you apply the learned models on real world data. How did you experience it?

- The first semester is almost entirely theoretical, with Stats 243 having applied elements. The second semester is where you do more application and work on projects. I highly recommend doing a project on your own or choosing an ML project that has been done and looking at examples before the second semester. EDA, model selection, fitting, estimation. Even a brief small project to get the hang of it will put so much of second semester in context as you experience it. Becoming more familiar with Python, R, and SQL is an excellent usage of your winter break (see my “programming” section in the “industry” tab).
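A minimal sketch of what such a small project might look like, in Python with only the standard library. The data here is synthetic and the two candidate models (a constant baseline and a simple linear regression) are chosen purely for illustration; none of this comes from the course materials, and a real project would use a real dataset and libraries like pandas or scikit-learn.

```python
import random
from statistics import mean

random.seed(0)  # reproducible synthetic data

# Synthetic data (an assumption for illustration): y = 2 + 3x + noise
xs = [random.uniform(0, 10) for _ in range(200)]
ys = [2 + 3 * x + random.gauss(0, 1) for x in xs]

# EDA: look at basic summaries before modeling.
print(f"mean x = {mean(xs):.2f}, mean y = {mean(ys):.2f}")

# Hold out the last 50 points to compare models on unseen data.
x_train, y_train = xs[:150], ys[:150]
x_test, y_test = xs[150:], ys[150:]


def fit_line(x, y):
    """Ordinary least squares for y = a + b*x; returns (a, b)."""
    x_bar, y_bar = mean(x), mean(y)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    b = sxy / sxx
    return y_bar - b * x_bar, b


def mse(predictions, actual):
    """Mean squared error between predictions and observed values."""
    return mean((p - a) ** 2 for p, a in zip(predictions, actual))


# Model 1: intercept only (predict the training mean everywhere).
baseline = mean(y_train)
mse_baseline = mse([baseline] * len(y_test), y_test)

# Model 2: simple linear regression (fitting / estimation).
intercept, slope = fit_line(x_train, y_train)
mse_linear = mse([intercept + slope * x for x in x_test], y_test)

# Model selection: keep whichever model has the lower held-out error.
best = "linear" if mse_linear < mse_baseline else "baseline"
print(f"baseline MSE = {mse_baseline:.2f}, linear MSE = {mse_linear:.2f}, pick {best}")
```

Even something this small touches each stage (EDA, fitting, estimation, model selection), which is the context that makes the second semester easier to follow.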

Question: Could you tell me a bit more about the capstone project? Should I think of it as a master thesis? Could you tell me about 1-2 example projects?

- To finish the MA, you can choose either a traditional research thesis, or the capstone project. 99% of students (data purely anecdotal) choose the capstone. The capstone together with your comprehensive exam qualify you to receive your degree.

- There are 10 example projects that Thomas and Libor outline to offer as options, or you can choose your own if your proposal is accepted. One project was about analyzing airbnb rental descriptions using natural language processing to find out if location is truly the only thing that matters, or if there is a “more rentable” set of description features. Another one is Alzheimer’s disease brain imaging analysis to find patterns for early detection. Another is geospatial analysis of taxi cab data from Manhattan to find information about optimal fares or pathing for drivers.

Question: How do you see the job prospects of graduates? What are roles that students tend to go for? In my case, given my previous work experience, I would not want to join a corporate graduate programme but join as an experienced hire.

- From what I have seen, some students go directly into a full data-scientist position or into a statistical consulting capacity, while others start as entry level data analysts, or apply to internships rather than full time positions. Your post graduation path is dictated mostly by your own job search abilities, connections, and prior work experience. Many of my classmates have several years of experience, and so also won’t be applying to entry level roles.

Question: When you say that the program is very interdisciplinary, do you mean in terms of background of students taking it? I am asking because from my understanding, the degree sounds fairly focused on statistics, compared to other Stats/DS programs that have more elements of computer science in it for example.

- I suppose I mean both that it has students from lots of different backgrounds, and incorporates many different disciplines. It is less CS heavy than other programs, and leaves most of the learning about programming and implementation for you to do on your own.

Question: As far as my third question on “modern” statistical methods is concerned, I tried to ask if the degree is focusing more on fundamental statistics only or will also teach you commonly applied statistical methods in the industry such as dimensionality reduction techniques, ML, NLP, computer vision, etc. I have seen some other graduate programmes (e.g. NYU’s MS in DS programme) that seem a bit more focused on ML applications. Berkeley’s programme is of course a statistics programme and to that extent focuses more on fundamental statistical methods. However, I am trying to understand how much of the application of these in ML is covered. I see that the module Statistical Learning Theory seems to cover these methods but am wondering how much else they are covered (you mentioned 201B and 230A also cover some of these methods). Would you think it is a fair assumption that this programme provides a rigorous understanding of statistics and includes some elements of ML but leaves the application of these for the capstone project and coursework?

- Your last sentence is accurate, I would say.

Question: Speaking about electives, which elective did you take and which one would you recommend? For example, would you recommend taking the ML focused module from the stats department (241A) or would you take the module offered by the computer science department?

- Personally, in order, I think that the CS courses will give you a bigger bang for your buck, and then a rigorous class from the stats department, then other departments which have more project based classes can be interesting, but I wouldn’t recommend unless it was similar enough to the field you intend to enter. I think for you it would make sense to find an economics or finance elective with sufficient programming or stats to qualify.

Question: Lastly, I am also holding offers from NYU, Imperial College London and some other European programs. From your insights into the field, how would you say Berkeley ranks up against NYU and Imperial? From my understanding Berkeley is really top notch in the field of statistics. However, I wanted to ask what your view is and how you see NYU and Imperial in the field of statistics/ml/ds.

- I can’t speak to comparing your different offers, simply because there are too many unknowns. However, I would say that positioning yourself optimally for whatever you plan to do afterwards should be your number one priority. I’m hoping to work and live in the bay after I graduate, so Berkeley was a natural fit, as well as being the rank 2 university for Statistics. If finance is your main goal, it may be less convenient to build your network in Berkeley for 2-3 semesters, but if you’re single (unmarried) and mobile, it will be less of a strain, and one year goes very quickly, if you choose to do 2 semesters. You have a lot of resources, and the networking is good at a high rank institution, but sometimes the environment or instruction can suffer due to MA students being less of a priority than research, PhD students, etc. But I think this exists in academia more broadly to different degrees.

Question: On the point on modern / ML techniques, do you feel that these are sufficiently covered as part of the programme (lectures+coursework+capstone project) so that you would be able to apply them? My objective of this degree is to a) deepen my understanding of statistics and b) learn new tools that I have not learned in my econometrics classes / professional experience, such as Neural Networks, NLP, support vector machines, etc. From how you describe the coursework (web scraping + analysis) and the capstone project (airbnb NLP project, Alzheimer computer vision project), it sounds like you do learn these tools as part of the degree. Do you see it the same way? Do you feel there are some things you did not learn (compared to your expectations or compared to a DS degree)?

- Yes, I think they are sufficiently covered. Additionally, the second semester is very much tailorable to fit what you want to get out of it, so I’m sure you can pick a method or framework you want to improve in or specialize with and incorporate it to your coursework. There are some things I didn’t learn, but I could have learned any of the things you mentioned; I just chose other things, and I didn’t fill up my schedule quite to the brim; I worked throughout the program, GSI’d and also I train competitively in Olympic Weightlifting, along with being married, so the program isn’t the sole focus for me like it is for most students.

Question: Lastly, you mentioned studying for 3 semesters. Is this common? It does of course sound nice to spend 3 semesters instead of 2 semesters studying, however this would mean a 50% increase in (already high) cost of the degree…

- There are a handful (maybe a dozen or more) students who do the third semester. I’m contemplating it myself in the current status of things, but leaning toward finishing in May. I think for you a third semester wouldn’t make much sense, since you have experience and know what you want to do. However, for students who want a more comprehensive study of certain ML methods, or want to spread out their coursework in order to be more thorough, the third semester can be a strategic means of maximizing the value of their time at Berkeley.

(continued…)

Question: How did you like the UCB Stats MA?

- I enjoyed it, but the last few months being remote were rough; do not recommend doing a remote program, especially because your connections are a huge part of getting a masters.

Question: I have been going back and forth between whether I should do a 2-year program or this one. I currently work as a Data Analyst and want to move into Data Scientist positions. Could you speak a little to how this MA helps prepare you for a Data Scientist position and how it is perceived by the industry (do employers see this as equivalent to a traditional Stats MS in your experience)?

- How the program is viewed is based on ranking and degree; I didn’t have any trouble on account of it being a 1-year program. Also, I didn’t need to specify, so it’s possible some employers assumed it was a 2-year program. There’s the option to do a third semester, so for some folks it’s 1.5 years. If I were you, I would choose a 2-year program over UCB only if the school is a name brand like Berkeley.

Question: Any advice if you were in my position (UCI Math BS, worked as a Data Analyst for a year now, plan on doing a Masters this Fall, and getting a job as a Data Scientist)?

- You’ll be seen as not having enough CS experience, so I would try to do as much CS as possible in your current work. Try to build your data engineering skills and understand the infrastructure and software because the industry is currently a bit more partial to CS folks than stats folks if you want to be a Data Scientist as opposed to an analyst. Would you be able to get promoted to DS at your current company before you started a program? That would be one of the best things for your resume.

Question: Any technical advice (skills to focus on to move from Analyst to Scientist, whether it’s statistical concepts or coding languages or beyond)?

- Try to find a project that works well at several scales, and build it out over the course of several months using cloud computing resources. If it costs money per month to maintain those cloud resources, just count that as tuition. I would recommend the GCP courses (which are very cheap) or AWS certs if you want to get a feel for what it’s actually like in industry with enterprise tools. I would advise you to take Andrew Ng’s ML course (I think it’s offered for free online) and make sure you brush up on your Linear Algebra, in case your 121A and B were taken earlier on in your UCI math career (I took mine winter & spring quarter of freshman year, so it was rusty, lol). I also recommend John Rice’s book for brushing up on stats and probability.

Question: How tight-knit / collaborative is the MA cohort? Did you interact frequently with the other MA students in classes/studying/etc.?

- The cohort is as tightly knit as you want it to be. In my cohort there were several subgroups that as far as I can tell were extremely close. In the subgroup that I spent time with, we basically got to school around the same time, studied between classes together, then stayed in the lounge at night until we finished the assignments.

Question: I understand that graduate degrees are meant to be focused on studying, but was there time to do other activities such as joining clubs/events/exploring San Francisco? Or is the program rigorous enough that I should expect to focus all my time on my studies?

- It depends. If your background is strong, you will probably be able to do well in the program and also do several activities outside. In my case, I lifted weights at a local gym, worked as a GSI and was phasing out of my pre-masters job, and found time here and there to explore. I knew early on I wasn’t going to be the top of my class, because there are students who have no desire to do anything outside of class and have stronger, more recent coursework from top institutions. But nobody really asks about your GPA if you’re going into industry after you graduate, so I would focus more on skill building and getting familiar with technologies anyway. To answer your first question directly, anyone in my cohort who wanted to find time to join clubs/explore the city found that time. To your second question, the program is rigorous enough that the top students focused much of their time on their studies and did not explore or have as much “fun”, but I don’t know if they would have if the program were not rigorous.